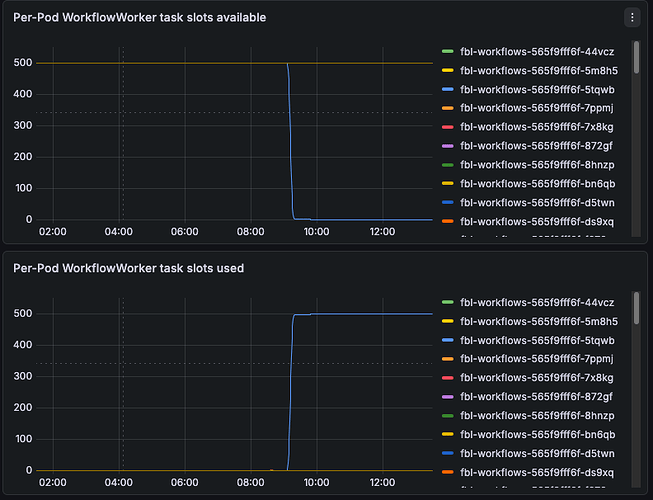

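@tihomir We have found two kinds of potential deadlock errors (`[TMPRL1101]`). In the first, the worker is still decoding the workflow input (`typing.get_type_hints` called from `temporalio.converter.value_to_type`). In the second, our workflow code is deserializing a result with `dataclasses_json` (`RetrieveForensicsMetadataOutput.from_dict`). Do you think the two could be related?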

```
Traceback (most recent call last):
  File "/usr/local/lib/python3.11/typing.py", line 385, in <genexpr>
    ev_args = tuple(_eval_type(a, globalns, localns, recursive_guard) for a in t.__args__)
  File "/usr/local/lib/python3.11/typing.py", line 385, in _eval_type
    ev_args = tuple(_eval_type(a, globalns, localns, recursive_guard) for a in t.__args__)
              ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/typing.py", line 2336, in get_type_hints
    value = _eval_type(value, base_globals, base_locals)
            ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/temporalio/converter.py", line 1632, in value_to_type
    field_hints = get_type_hints(hint)
                  ^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/temporalio/converter.py", line 594, in from_payload
    obj = value_to_type(type_hint, obj, self._custom_type_converters)
          ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/temporalio/converter.py", line 311, in from_payloads
    values.append(converter.from_payload(payload, type_hint))
                  ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/temporalio/worker/_workflow_instance.py", line 1990, in _convert_payloads
    return self._payload_converter.from_payloads(
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/temporalio/worker/_workflow_instance.py", line 1025, in _make_workflow_input
    args = self._convert_payloads(init_job.arguments, arg_types)
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/temporalio/worker/_workflow_instance.py", line 405, in activate
    self._workflow_input = self._make_workflow_input(start_job)
                           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/temporalio/worker/_workflow.py", line 683, in activate
    return self.instance.activate(act)
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/concurrent/futures/thread.py", line 58, in run
    result = self.fn(*self.args, **self.kwargs)
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/concurrent/futures/thread.py", line 83, in _worker
    work_item.run()
  File "/usr/local/lib/python3.11/threading.py", line 975, in run
    self._target(*self._args, **self._kwargs)
  File "/usr/local/lib/python3.11/threading.py", line 1038, in _bootstrap_inner
    self.run()
  File "/usr/local/lib/python3.11/threading.py", line 995, in _bootstrap
    self._bootstrap_inner()
  File "/usr/local/lib/python3.11/site-packages/debugpy/_vendored/pydevd/_pydev_bundle/pydev_monkey.py", line 1134, in __call__
    ret = self.original_func(*self.args, **self.kwargs)
          ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
temporalio.worker._workflow._DeadlockError: [TMPRL1101] Potential deadlock detected: workflow didn't yield within 2 second(s).

level: ERROR
message: Failed handling activation on workflow with run ID 019bb80a-1d5d-763f-a735-325c62fabd6f
name: temporalio.worker._workflow
timestamp: 2026-01-13T15:47:11Z
```
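In the first trace the workflow thread is inside `typing.get_type_hints`, which `temporalio.converter.value_to_type` calls on the workflow argument type. A minimal stdlib sketch for checking how long that call takes, compared against the 2-second budget from the error. `Item`, `WorkflowInput` and `slowest_type_hints_call` are hypothetical stand-ins, not our real types; the real argument types would be plugged in instead. This only measures hint resolution in isolation and says nothing about the converter's other work.

```python
import dataclasses
import time
import typing
from typing import List, Optional


# Hypothetical stand-ins: replace these with the real workflow argument types.
@dataclasses.dataclass
class Item:
    name: str
    tags: List[str]


@dataclasses.dataclass
class WorkflowInput:
    items: List[Item]
    parent: Optional[Item] = None


# Taken from the error text: "workflow didn't yield within 2 second(s)".
DEADLOCK_BUDGET_S = 2.0


def slowest_type_hints_call(tp: type, repeat: int = 100) -> float:
    """Call typing.get_type_hints(tp) `repeat` times and return the slowest call in seconds."""
    slowest = 0.0
    for _ in range(repeat):
        start = time.perf_counter()
        typing.get_type_hints(tp)
        slowest = max(slowest, time.perf_counter() - start)
    return slowest


if __name__ == "__main__":
    slowest = slowest_type_hints_call(WorkflowInput)
    print(f"slowest get_type_hints call: {slowest * 1000:.3f} ms "
          f"(budget: {DEADLOCK_BUDGET_S:.0f} s)")
```

If the real types resolve in milliseconds outside the worker, the time is more likely being spent elsewhere on that thread.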

```
Traceback (most recent call last):
  File "/usr/local/lib/python3.11/_weakrefset.py", line 39, in _remove
    def _remove(item, selfref=ref(self)):
  File "/usr/local/lib/python3.11/site-packages/dataclasses_json/core.py", line 362, in _decode_items
    return list(_decode_item(type_args, x) for x in xs)
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/dataclasses_json/core.py", line 288, in _decode_generic
    xs = _decode_items(_get_type_arg_param(type_, 0), value, infer_missing)
         ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/dataclasses_json/core.py", line 219, in _decode_dataclass
    init_kwargs[field.name] = _decode_generic(field_type,
                              ^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/dataclasses_json/api.py", line 70, in from_dict
    return _decode_dataclass(cls, kvs, infer_missing)
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/source/fbl-workflows/src/voltron/fbls/explanation_pipeline/workflows/explanation_hold.py", line 358, in get_forensics_metadata
    return RetrieveForensicsMetadataOutput.from_dict(result)
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/source/fbl-workflows/src/voltron/fbls/explanation_pipeline/workflows/explanation_hold.py", line 382, in run_add_workflow
    buffer_result: Optional[RetrieveForensicsMetadataOutput] = await self.get_forensics_metadata(origin, source, theater)
                                                               ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/source/fbl-workflows/src/voltron/fbls/explanation_pipeline/workflows/explanation_hold.py", line 333, in process_forensics
    result.append(await self.run_add_workflow(ThreatType.EMAIL, process_forensics))
                  ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/source/fbl-workflows/src/voltron/fbls/explanation_pipeline/workflows/explanation_hold.py", line 256, in run
    jobs.extend([x for x in await self.process_forensics(forensics) if x])
                            ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/temporalio/worker/_workflow_instance.py", line 2578, in execute_workflow
    return await input.run_fn(*args)
           ^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/temporalio/worker/_workflow_instance.py", line 986, in run_workflow
    result = await self._inbound.execute_workflow(input)
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/temporalio/worker/_workflow_instance.py", line 2194, in _run_top_level_workflow_function
    await coro
  File "/usr/local/lib/python3.11/asyncio/events.py", line 80, in _run
    self._context.run(self._callback, *self._args)
  File "/usr/local/lib/python3.11/site-packages/temporalio/worker/_workflow_instance.py", line 2171, in _run_once
    handle._run()
  File "/usr/local/lib/python3.11/site-packages/temporalio/worker/_workflow_instance.py", line 418, in activate
    self._run_once(check_conditions=index == 1 or index == 2)
  File "/usr/local/lib/python3.11/site-packages/temporalio/worker/_workflow.py", line 683, in activate
    return self.instance.activate(act)
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/concurrent/futures/thread.py", line 58, in run
    result = self.fn(*self.args, **self.kwargs)
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/concurrent/futures/thread.py", line 83, in _worker
    work_item.run()
  File "/usr/local/lib/python3.11/threading.py", line 975, in run
    self._target(*self._args, **self._kwargs)
  File "/usr/local/lib/python3.11/threading.py", line 1038, in _bootstrap_inner
    self.run()
  File "/usr/local/lib/python3.11/threading.py", line 995, in _bootstrap
    self._bootstrap_inner()
  File "/usr/local/lib/python3.11/site-packages/debugpy/_vendored/pydevd/_pydev_bundle/pydev_monkey.py", line 1134, in __call__
    ret = self.original_func(*self.args, **self.kwargs)
          ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
temporalio.worker._workflow._DeadlockError: [TMPRL1101] Potential deadlock detected: workflow didn't yield within 2 second(s).

level: ERROR
message: Failed handling activation on workflow with run ID 3b002ea5-1cb0-4cba-b14f-a91afbb8b2e5
name: temporalio.worker._workflow
timestamp: 2026-01-13T14:36:45Z
```